AI assistants can generate fluent, confident responses, but fluency isn't the same as usefulness. A response can be factually correct and still miss the point. It can sound helpful without solving the real problem.

Models need human feedback to learn the difference between answering a question and actually helping someone. That judgment requires your input.

The Conversation Response Rating Quest is now live on Perle Labs! Visit app.perle.xyz to start earning points.

What This Task Is

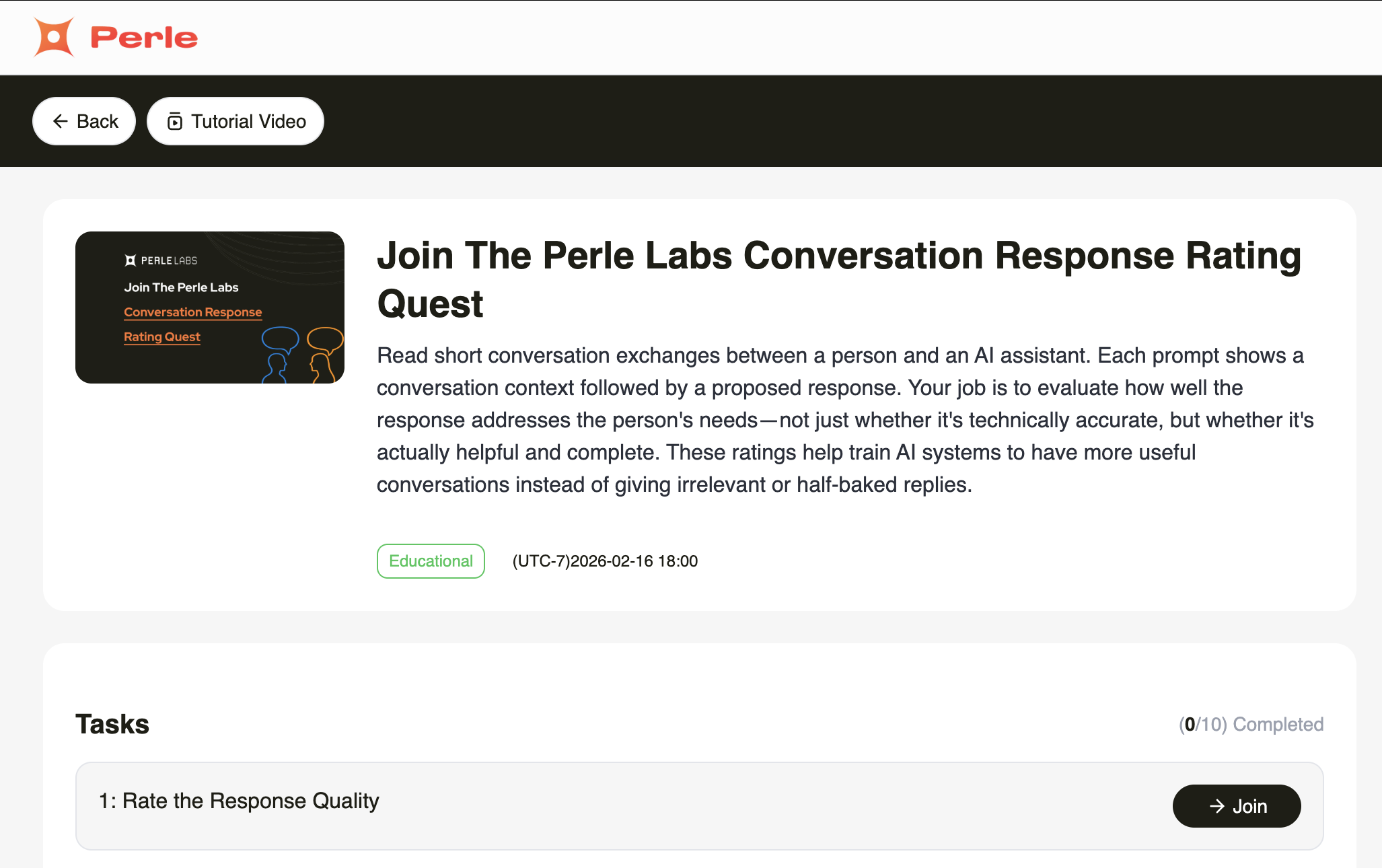

The Conversation Response Rating Quest is a set of 30 multiple-choice tasks. Each task presents a short conversation between a person and an AI assistant: you'll see the user's question and a proposed response.

Your job is to rate how well the response actually helps the person: not just its accuracy, but its usefulness and completeness. These ratings help train AI to give better, more helpful replies.

What You'll Do

Step 1: Read the conversation context and the proposed response.

Step 2: Evaluate how well the response serves the person's actual needs.

Step 3: Consider whether it answers what they asked, whether it is complete, and whether a real person would find it helpful.

Step 4: Select the rating that best matches the response quality and submit.

Choose the single most accurate rating for each response.

Rating Options

- Helpful: Clearly answers the question with relevant and complete information

- Off-Topic: Misunderstands or does not address the question

- Correct but Unhelpful: Factually accurate but doesn't solve the real need

- Incomplete: Partially answers but misses key details
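For readers who think in code, the four options above can be pictured as a closed set of labels, with exactly one label attached to each response. The sketch below is purely illustrative: the names `Rating`, `RatedResponse`, and `parse_rating` are hypothetical and are not part of the Perle Labs app or any published API.

```python
from dataclasses import dataclass
from enum import Enum


class Rating(Enum):
    """The four rating options listed above (labels copied from the post)."""
    HELPFUL = "Helpful"
    OFF_TOPIC = "Off-Topic"
    CORRECT_BUT_UNHELPFUL = "Correct but Unhelpful"
    INCOMPLETE = "Incomplete"


@dataclass(frozen=True)
class RatedResponse:
    """Hypothetical record: one question, one proposed response, one rating."""
    question: str
    response: str
    rating: Rating


def parse_rating(label: str) -> Rating:
    """Map a label string to exactly one Rating; unknown labels raise ValueError."""
    return Rating(label.strip())


example = RatedResponse(
    question="How do I reset my password?",
    response="Passwords protect your account from unauthorized access.",
    rating=parse_rating("Correct but Unhelpful"),
)
```

The example response is accurate but does not tell the person how to reset anything, which is the kind of case the "Correct but Unhelpful" option is meant to capture.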

Why It Matters

By contributing to this task, you are:

- Teaching AI to distinguish between accurate responses and genuinely helpful ones

- Building enterprise-grade datasets for assistant and support system improvement

- Improving how AI understands user intent and delivers complete answers

- Contributing to the sovereign data layer that enterprises and institutions require

This is judgment work that AI cannot learn alone. It is the human layer that makes assistant systems genuinely useful.

Task Details

- Points per task: 100

- Number of tasks: 30

- Total points available: 3,000

- Project eligibility: 1,000 points
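The numbers above fit together with simple arithmetic. The short sketch below checks the total and estimates how many tasks it takes to reach the eligibility figure. It assumes that every submitted task earns the full 100 points and that "Project eligibility" is a minimum points threshold; the post states neither explicitly, and the variable names are illustrative only.

```python
import math

# Figures taken from the Task Details list above.
POINTS_PER_TASK = 100
NUMBER_OF_TASKS = 30
ELIGIBILITY_POINTS = 1_000  # assumed to be a minimum threshold

# Total points if all 30 tasks are completed.
total_points = POINTS_PER_TASK * NUMBER_OF_TASKS

# Fewest tasks needed to reach the eligibility figure (assumes full points per task).
tasks_for_eligibility = math.ceil(ELIGIBILITY_POINTS / POINTS_PER_TASK)

print(total_points)           # 3000
print(tasks_for_eligibility)  # 10
```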

Visit the app today to start earning points!

Ready to Get Started?

The Conversation Response Rating Quest is now live on your Perle Labs dashboard: http://app.perle.xyz

Each rating you submit helps build auditable AI infrastructure for systems where "close enough" isn't good enough — not black-box pipelines, but human-verified data with a clear chain of custody.

Stay Connected

Whether you’re returning from earlier this season or joining for the first time, Season 1 is open and ready! And we're just getting started. Follow along with us on X, Discord, and Telegram to stay connected so you don’t miss what’s coming.

Human-verified. Auditable. Sovereign. Start contributing.
